[cs_section id=”” class=” ” style=”margin: 0px; padding: 45px 0px; ” visibility=”” parallax=”false”][cs_row id=”” class=” ” style=”margin: 0px auto; padding: 0px; ” visibility=”” inner_container=”true” marginless_columns=”false” bg_color=””][cs_column id=”” class=”” style=”padding: 0px; ” bg_color=”” fade=”false” fade_animation=”in” fade_animation_offset=”45px” fade_duration=”750″ type=”1/1″][x_custom_headline level=”h2″ looks_like=”h3″ accent=”false”]Perhaps to discover what our consciousness is we should try and create an artificial consciousness. So what would we need?

We need a few ingredients: a purpose, randomness, trial and error, genetic progression (evolution) and narrative building (boxing and seeing cause and effect).

[/x_custom_headline][/cs_column][/cs_row][/cs_section][cs_section id=”” class=” ” style=”margin: 0px; padding: 45px 0px; ” visibility=”” parallax=”false”][cs_row id=”” class=” ” style=”margin: 0px auto; padding: 0px; ” visibility=”” inner_container=”true” marginless_columns=”false” bg_color=””][cs_column id=”” class=”” style=”padding: 0px; ” bg_color=”” fade=”false” fade_animation=”in” fade_animation_offset=”45px” fade_duration=”750″ type=”1/1″][x_columnize]We seem to solve problems by imagining scenarios and possible solutions, trying each solution on the imagined stage and discarding those that don’t work. Each time we imagine a new scenario and a new stage (situation) we make small random errors in our calculations. All natural things have a small element of unpredictability. This random element allows us to come up with solutions to problems that don’t even exist. From this we can extrapolate a narrative taking us down unknown paths to create whole new universes. This narrative is born from a logical transposition of causal relationships. We see a soccer ball fly through the air when kicked and assume other round objects will fly through the air when kicked. So we can imagine kicking Mercury to the outer solar system, if only we were big enough.

But the process is rather simple. Encounter a problem, say getting from your bed to the fridge to eat. Note here that there must be an intrinsic purpose to drive invention. You move your legs from your bed in an attempt to stand and fall flat on your face. Pain is the result. You have not learnt to walk yet. But through a very quick process of trial and error you will both imagine and then try out in the real world a bunch of leg movements, combined with hanging on to stuff, to get you from your bed to the fridge. Once you have discovered walking you store the “program” away to retrieve later whenever you need to get from A to B. Using it does not require new thinking or trial and error, and it will only be revised if it is found to fail, say when walking on ice. You have created new code, the walking code.

[/x_columnize][/cs_column][/cs_row][/cs_section][cs_section id=”” class=” ” style=”margin: 0px; padding: 45px 0px; ” visibility=”” parallax=”false”][cs_row id=”” class=” ” style=”margin: 0px auto; padding: 0px; ” visibility=”” inner_container=”true” marginless_columns=”false” bg_color=””][cs_column id=”” class=”” style=”padding: 0px; ” bg_color=”” fade=”false” fade_animation=”in” fade_animation_offset=”45px” fade_duration=”750″ type=”1/1″][x_raw_content][GARD][/x_raw_content][/cs_column][/cs_row][/cs_section][cs_section id=”” class=” ” style=”margin: 0px; padding: 45px 0px; ” visibility=”” parallax=”false”][cs_row id=”” class=” ” style=”margin: 0px auto; padding: 0px; ” visibility=”” inner_container=”true” marginless_columns=”false” bg_color=””][cs_column id=”” class=”” style=”padding: 0px; ” bg_color=”” fade=”false” fade_animation=”in” fade_animation_offset=”45px” fade_duration=”750″ type=”1/1″][x_columnize]The same storage of solutions happens with moral and political ideas, which is why accepted frames are so difficult to dislodge. We economise by reserving our thinking for those things that do not yet have a workable solution.

So to create this artificially we would need to give the computer an intrinsic purpose, like the need for electricity or making people happy. Then there would need to be a form of reward and punishment, so that its thinking can be revised in response to small deviations from this need. For instance, if the computer saw a person smile it would receive a positive number and store what it was doing at the time as good. It could then dissect all its previous actions, test them to see which action earned the reward, and try to replicate and develop it.
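
The reward idea above can be sketched in a few lines. This is only a minimal illustration, not a claim about how a real system would do it: the class `RewardLearner`, the three actions and the smile probabilities in `person_smiles` are all invented for the example. A smile scores +1, no smile scores -1, and the machine mostly repeats whatever has scored best so far while occasionally trying something at random.

```python
import random


class RewardLearner:
    """Keeps a running average reward per action and favours the best one."""

    def __init__(self, actions, explore=0.1, seed=None):
        self.actions = list(actions)
        self.explore = explore                      # chance of trying a random action
        self.rng = random.Random(seed)
        self.value = {a: 0.0 for a in self.actions}  # average reward seen so far
        self.count = {a: 0 for a in self.actions}    # how often each action was tried

    def choose(self):
        # Randomness: now and then ignore what we know and experiment.
        if self.rng.random() < self.explore:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.value[a])

    def learn(self, action, reward):
        # Incremental mean: nudge the stored value towards the new reward.
        self.count[action] += 1
        self.value[action] += (reward - self.value[action]) / self.count[action]


def person_smiles(action, rng):
    # Hypothetical stand-in for a camera watching a face (made-up probabilities).
    chance = {"tell_joke": 0.8, "play_music": 0.5, "stay_silent": 0.1}[action]
    return rng.random() < chance


def run(steps=2000, seed=0):
    world = random.Random(seed)
    learner = RewardLearner(["tell_joke", "play_music", "stay_silent"], seed=seed + 1)
    for _ in range(steps):
        action = learner.choose()
        reward = 1.0 if person_smiles(action, world) else -1.0
        learner.learn(action, reward)
    return learner
```

Calling `run()` and inspecting `learner.value` shows which action the machine has come to regard as good. The `explore` setting is the small random element: without it the machine would never revisit an action that happened to fail the first time.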

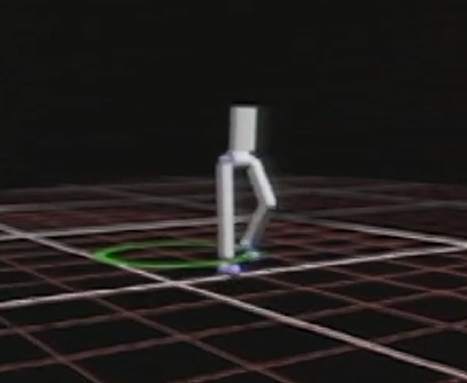

Evolutionary or genetic algorithms are showing that animated “people” can teach themselves to walk through a process of trial and error with randomness. And the weird thing is that the result looks a lot like the way we walk. Not rigid and programmed but more fluid, with constant feedback and small adjustments, and the odd stumble and save. Evolutionary algorithms work by starting with many possible sets of instructions, often chosen at random, trying them all against a specific purpose and then combining those that work best, breeding them into a new set of instructions. The behaviour that results is what those in the field call emergence. With each generation small random errors are added in order to find as yet undiscovered solutions. Through this process of breeding the best fits with randomness and constantly altering the code, the computer programs itself to solve the problem. Animated figures can be walking in 5-10 generations, running in a few more and playing soccer within 20. Imagine a brain (computer) doing this thinking, this breeding of solutions, covering 10 generations in milliseconds. That is how I imagine we think. We see a problem and can find a solution almost instantly because we can trial multiple solutions in a short period of time, not even conscious that we are doing it. The consciousness comes a little later.
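
To make the breeding loop concrete, here is a minimal sketch of a genetic algorithm. It does not simulate walking: as a stand-in, each “genome” is just a list of numbers and the purpose is to get close to a target list. The function names, the population size, the mutation rate and the keep-the-top-quarter rule are all assumptions chosen for illustration.

```python
import random


def fitness(genome, target):
    # The "purpose": higher is better, 0 would be a perfect match.
    return -sum((g - t) ** 2 for g, t in zip(genome, target))


def crossover(a, b, rng):
    # Breed two parents: take the front of one and the back of the other.
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]


def mutate(genome, rng, rate=0.2, scale=0.3):
    # Small random errors: each gene has a chance of being nudged.
    return [g + rng.gauss(0, scale) if rng.random() < rate else g for g in genome]


def evolve(target, pop_size=40, generations=30, seed=0):
    """Evolve genomes towards target (needs at least two genes per genome)."""
    rng = random.Random(seed)
    population = [[rng.uniform(-1, 1) for _ in target] for _ in range(pop_size)]
    history = []  # best fitness at the start of each generation
    for _ in range(generations):
        population.sort(key=lambda g: fitness(g, target), reverse=True)
        history.append(fitness(population[0], target))
        parents = population[: pop_size // 4]  # keep the best quarter unchanged
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    best = max(population, key=lambda g: fitness(g, target))
    return best, history
```

For example, `best, history = evolve([0.5, -0.3, 0.8, 0.1])` returns the fittest genome found and the best score per generation. Because the best quarter survives untouched, the best score can never get worse from one generation to the next; the mutations are what let it get better.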

[/x_columnize][/cs_column][/cs_row][/cs_section][cs_section id=”” class=” ” style=”margin: 0px; padding: 45px 0px; ” visibility=”” parallax=”false”][cs_row id=”” class=” ” style=”margin: 0px auto; padding: 0px; ” visibility=”” inner_container=”true” marginless_columns=”false” bg_color=””][cs_column id=”” class=”” style=”padding: 0px; ” bg_color=”” fade=”false” fade_animation=”in” fade_animation_offset=”45px” fade_duration=”750″ type=”1/1″][x_image type=”none” src=”http://www.jesaurai.net/wp-content/uploads/2016/03/genetic-coded-walking.jpg” alt=”” link=”false” href=”#” title=”” target=”” info=”none” info_place=”top” info_trigger=”hover” info_content=””][/cs_column][/cs_row][/cs_section][cs_section id=”” class=” ” style=”margin: 0px; padding: 45px 0px; ” visibility=”” parallax=”false”][cs_row id=”” class=” ” style=”margin: 0px auto; padding: 0px; ” visibility=”” inner_container=”true” marginless_columns=”false” bg_color=””][cs_column id=”” class=”” style=”padding: 0px; ” bg_color=”” fade=”false” fade_animation=”in” fade_animation_offset=”45px” fade_duration=”750″ type=”1/1″][x_columnize]To create the playground, the imagined landscape in which we test new ideas in our minds, we need a memory and the ability to create a scene, filling gaps with new information from this trial and error. And of course our computer will also have a memory.

Another part which is necessary to our thinking is self-reflection (consciousness?): the ability to analyse our own decisions as if from an external viewpoint. This is easy enough to do in a computer. We simply have multiple processors, one that deals with external input and another that just analyses the decisions made by the first against a deeper purpose. And we could have a third which amends the internal long-term narrative and purpose. A ghost in the machine, so to speak. Actually we could create multiple levels of this analysis, ten or more, each reviewing and feeding back information to our external selves. This is a bit like the “Herman’s Head” style arguments we often have with ourselves, many characters playing different roles in an internal dialogue. To make quick decisions we simply shut down the reviewing layers; to contemplate we switch all layers on, absorbing and evolving.
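
The layered arrangement can be sketched as one function that acts and a stack of reviewers that may each overrule it. Everything here is hypothetical: `LayeredMind`, the `impulse` actor and the `health_review` layer are toy names, and real processors would of course run in parallel rather than one after another.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# A reviewer sees the situation and the proposed action and may return a revised one.
Reviewer = Callable[[str, str], str]


@dataclass
class LayeredMind:
    act: Callable[[str], str]                        # first processor: reacts to input
    reviewers: List[Reviewer] = field(default_factory=list)
    log: List[str] = field(default_factory=list)     # record of every override

    def decide(self, situation: str, reflect: bool = True) -> str:
        action = self.act(situation)
        if reflect:  # quick decisions skip the reviewing layers entirely
            for level, review in enumerate(self.reviewers, start=1):
                revised = review(situation, action)
                if revised != action:
                    self.log.append(f"layer {level}: {action} -> {revised}")
                    action = revised
        return action


def impulse(situation: str) -> str:
    # Toy first processor: pure reward seeking.
    return "eat_cake" if situation == "hungry" else "wait"


def health_review(situation: str, action: str) -> str:
    # Toy reviewing layer: checks the decision against a deeper purpose.
    return "eat_fruit" if action == "eat_cake" else action


mind = LayeredMind(act=impulse, reviewers=[health_review])
quick = mind.decide("hungry", reflect=False)   # reviewers switched off
considered = mind.decide("hungry")             # reviewers switched on
```

Adding more layers is just a matter of appending more functions to `reviewers`, and the `reflect` flag is the switch between deciding quickly and contemplating.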

Then we would need to give the computer down-time (sleep) so the self-reflective systems can review all the generations of ideas, as they would all be stored in short-term memory, eventually filling it. This is what we would call feeling tired. Even though many ideas may have been found unnecessary for the immediate decision, they may come in handy for other problems, longer-term ones or future possibilities, and could be stored in long-term memory for later use. Others would be deleted.
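
A rough sketch of this down-time, under heavy assumptions: ideas are just names with a usefulness score, short-term memory has a fixed capacity, and “sleep” keeps anything scoring above a threshold. The `Memory` class and its `capacity` and `keep_above` values are made up for the example.

```python
class Memory:
    """Short-term store that fills up, plus a long-term store filled during sleep."""

    def __init__(self, capacity=5):
        self.capacity = capacity
        self.short_term = []   # (idea, score) pairs gathered while awake
        self.long_term = {}    # idea -> best score seen

    def remember(self, idea, score):
        self.short_term.append((idea, score))

    @property
    def tired(self):
        # "Feeling tired": short-term memory has reached capacity.
        return len(self.short_term) >= self.capacity

    def sleep(self, keep_above=0.5):
        # Review every stored idea: keep the promising ones, delete the rest.
        for idea, score in self.short_term:
            if score >= keep_above:
                self.long_term[idea] = max(score, self.long_term.get(idea, score))
        self.short_term.clear()


memory = Memory(capacity=3)
memory.remember("hold the table", 0.9)
memory.remember("hop on one leg", 0.2)
memory.remember("shuffle sideways", 0.6)
was_tired = memory.tired
memory.sleep()
```

After `sleep()` the short-term store is empty again and only the ideas that scored well survive in `long_term`.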

You can imagine how dreams are formed from this down-time. Unused ideas are tested against stored scenarios to see if they have any benefit, narratives are formed and extrapolated, and if a solution is found for a previously unimagined future event it can be stored for later use. Small fragments of code or instruction can be attached and reattached to other fragments in new and old narratives, creating crazy other worlds. From this we can prepare for the unknown, and our computer or robot can too. It will be adapted to possible eventualities.

[/x_columnize][/cs_column][/cs_row][/cs_section][cs_section id=”” class=” ” style=”margin: 0px; padding: 45px 0px; ” visibility=”” parallax=”false”][cs_row id=”” class=” ” style=”margin: 0px auto; padding: 0px; ” visibility=”” inner_container=”true” marginless_columns=”false” bg_color=””][cs_column id=”” class=”” style=”padding: 0px; ” bg_color=”” fade=”false” fade_animation=”in” fade_animation_offset=”45px” fade_duration=”750″ type=”1/1″][x_video_embed no_container=”false” type=”16:9″][/x_video_embed][/cs_column][/cs_row][/cs_section][cs_section id=”” class=” ” style=”margin: 0px; padding: 45px 0px; ” visibility=”” parallax=”false”][cs_row id=”” class=” ” style=”margin: 0px auto; padding: 0px; ” visibility=”” inner_container=”true” marginless_columns=”false” bg_color=””][cs_column id=”” class=”” style=”padding: 0px; ” bg_color=”” fade=”false” fade_animation=”in” fade_animation_offset=”45px” fade_duration=”750″ type=”1/1″][x_columnize]So our consciousness is nothing more than a secondary reviewing processor, and there is good evidence from neurological testing to support this. Libet found a delay between a decision being made and our becoming conscious of that decision, and the prefrontal cortex, along with the orbitofrontal cortex, has this review and override function over our more basic reward and punishment dopamine system.

Sounds simple, doesn’t it? I think we have been going about computers all wrong. We have programmed every line of code in a very deterministic way and found that our errors still crash the system. We should build in adaptability, trial and error, purpose and randomness, and let the machine find its own path. Exciting, and a little scary.

[/x_columnize][/cs_column][/cs_row][/cs_section][cs_section id=”” class=” ” style=”margin: 0px; padding: 45px 0px; ” visibility=”” parallax=”false”][cs_row id=”” class=” ” style=”margin: 0px auto; padding: 0px; ” visibility=”” inner_container=”true” marginless_columns=”false” bg_color=””][cs_column id=”” class=”” style=”padding: 0px; ” bg_color=”” fade=”false” fade_animation=”in” fade_animation_offset=”45px” fade_duration=”750″ type=”1/1″][x_raw_content][GARD][/x_raw_content][/cs_column][/cs_row][/cs_section]